Now you see it, now you can’t

Monday | June 21, 2010

DB here:

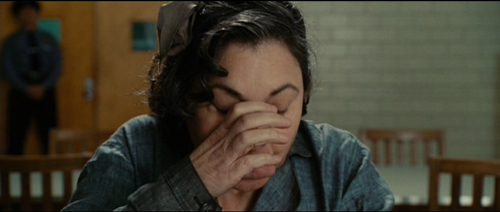

We usually respond to films spontaneously, but afterward we can think about our responses and figure out why we reacted as we did. When we’re fooled by a mystery, for instance, we can re-watch the film and trace exactly how we were misled. Now that Shutter Island is out on DVD, fans will be dissecting its visual gimmicks. Even when a film isn’t a mystery, a lot of critical analysis involves what we might call a rational reconstruction of how the whole shebang works. Novice screenwriters crack open a movie like The Apartment or The Godfather to peer into the fine mesh of plot construction, to tease out all the setups and plants and twists that seem inevitable only after the fact.

This is film research, we might say, at the personal level. Not in the sense of your or my unique identity, but rather at the scale at which we see the world. We don’t see atoms or gravity. We evolved to sense and think about middle-sized social and physical phenomena, like places and objects and, especially, other humans. We’re aware of the world because we sense ourselves as individual agents, guided by intentions and desires and beliefs. We’re used to talking about films at this level. When we track action and character, note surroundings and time passing, or ask about the purposes of a plot device or theme, we are working at the level of personhood.

But life assigns us to other levels too. There is the subpersonal level. All kinds of things are happening to you now that you can’t be aware of. You can’t watch the cells in your retina detect this sentence, or the neurons in your brain firing to make sense of it, or the flow of signals to your hand urging the mouse to scroll onward. A huge amount of our mental activity takes place behind the scenes that flit through our consciousness. We can’t pay attention to the man behind the curtain—partly because there is nobody there.

There’s also the suprapersonal level, the level of collective behavior patterns. Now we’re talking about people as parts of large-scale forces, like groups and cultures and societies. Historians have traditionally worked at this level. For example, some researchers have traced how film audiences, en masse, have responded to movies.

More strikingly, many scientists now study “self-organization”—the emergence of patterns of order that don’t seem to be willed or intended by individuals or groups. We find impressive instances in nature: fish swim and birds flock in intricate patterns that no one fish or bird could imagine or dictate. Such self-organization is even more striking in human activities like traffic flow or online networks. Is there a sort of “physics of society”? No doubt people have intentions and quirks, but often we can bracket those out and see shapes in the data that no one could have designed. The classic instance is a power law. A remarkable example is Vilfredo Pareto’s discovery that income distribution in any society tends to settle out as 20 percent of the people controlling 80 percent of the wealth. Mark Buchanan sums up the suprapersonal viewpoint this way: “Think patterns, not people.”

We know we can study films at the personal level. How can we study films subpersonally and suprapersonally too?

Reverse-engineering a movie

Mon Oncle.

My answer comes after four days earlier this month at the annual convention of the Society for Cognitive Studies of the Moving Image. We met in Roanoke, Virginia, in a massive nineteenth-century hotel made over into a convention center. You know the place has things under control when every PowerPoint presentation works flawlessly.

What ideas unite the film scholars, psychologists, and philosophers who gathered here? Roughly, the members explore moving-image media through empirical methods. Empirical inquiry can include classic scientific method (hypothesis/ experiment) or methods of aesthetic, historical or quantitative analysis. Typically the goal is not interpretation of a particular film or TV show or videogame but rather understanding of some general aspects of these media. Not explication, we might say, but rather explanation. Further, most members of the Society are interested in ways that film can be illuminated by areas of modern psychological research, such as neuroscience, cognitive science, and evolutionary psychology.

Some of our philosophers would say that they pursue conceptual analysis rather than empirical inquiry. Still, they join our meetings because the sorts of concepts they want to analyze are the ones that the film folk and the psychologists deploy—concepts like artistic intention or the nature of genre. Many of our liveliest sessions have come from disputes between Filmies, Psychos, and Philosophes.

For more on what we do, I’ve offered some background in earlier entries on this site. I previewed the 2008 convention here and here. I previewed the 2009 convention here and here.

Previews, but not followups. The big problem covering these events is that they’re so busy I have no time to blog during them, and by the time they’re over I’m usually en route to Il Cinema Ritrovato in Bologna. So I tended to make those entries introductions to the cognitive perspective, rather than surveys of who said what. This year, because our gathering was earlier than usual, I’m trying to sum up the event reasonably soon after it ended. We had simultaneous sessions, sometimes three at once, so I attended fewer than half of the talks. I’ll try to mention presentations that seem relevant, even if I didn’t hear them. Similarly, I heard some presentations (e.g., Lisa Broad on possible worlds) that were stimulating but don’t quite fit into my thesis here.

There were plenty of talks that developed arguments at the level I called personal. That is, they analyzed how films were designed to achieve certain effects. This calls for “reverse engineering”: starting from plausible viewer responses and then looking for creative choices made by the filmmakers that seemed to fulfill particular functions.

Take as an example Carl Plantinga’s paper on how we strike up moral attitudes toward characters. He was interested in how we achieve what Murray Smith calls “allegiance”—a “pro-attitude” toward certain characters. Is it just a matter of wishing good things for them, or admiring their positive traits? Is it a matter of sympathy for their situation?

Carl argued that all these factors play a role, but they aren’t enough to assure our siding with a character. He argues that allegiance also involves moral judgments, or rather moral intuitions. Films exploit two facts about these moral intuitions: they must be summoned up quickly, without much thought, and they are often driven by emotion rather than ideas.

Oddly enough, our moral intuitions are not necessarily driven by moral standards! Carl drew on Anthony Appiah’s analysis of moral judgments as influenced by how a situation is framed, ordered, and primed—basic cognitive cues that influence responses. For example, Legends of the Fall sets up two brothers, one conventionally moral and the other not. Yet it’s the wild, violent Tristan who earns our sympathies, because he displays vitality, youth, beauty, sensitivity, and closeness to nature. The upshot is that we rationalize a moral judgment on non-moral grounds. Carl got several questions about his conception of morality and the possibility that our moral intuitions are tied to things we value, like beauty.

Malcolm Turvey offered a paper on gags in Jacques Tati. Pointing to prior work on how Tati’s gags are integrated into a shot’s composition (scattered so that we may miss them) and linked to one another (through overlap, Kristin has suggested), Malcolm went on to argue that the gags themselves don’t obey the conventions of classic comedy. They exhibit strategies of misinterpretation, blockage, ellipsis, fragmentation, and concealment that are highly original and unusually challenging. Malcolm is working on a book on “ludic modernism,” and he sees Tati as fitting into this tradition.

Malcolm’s precise and persuasive account was framed by a strong attack on the tendency of cognitive film theory to concentrate on ordinary, even undistinguished films and ignore problematic and avant-garde instances. He pointed to passages in Steven Pinker’s writings that mock experimental art, and he urged cognitive film researchers to “call Pinker out” for his borderline Philistinism. He remarked that psychological researchers could learn as much from Tati’s work as from ordinary films…and maybe discover new things.

A similar sort of rational reconstruction at the level of personal response was found in many other papers I heard. Jason Gendler dissected the misleading narration in The Blue Gardenia. Rory Kelly wondered why viewers tend to forget the water-utility plotline at the start of Chinatown. James Fiumara considered why modern startle effects are comparatively rare in classic horror films. Torben Grodal isolated a group of “disgust-driven phobic films” (Taxi Driver, Blade Runner, Se7en) that sink so deeply into disgust that melancholy drives out empathy. Lennard Hojberg studied circular camera movements that express the dizziness of love as based on embodied vision.

Some researchers used quantitative procedures to capture a film’s regularities. Monika Suckfuell (right) exposed some very complex patterns in a short film, Father and Daughter. These create a distinct emotional tone through what she calls “distance editing.” The patterns are combinations of thematic units like problem-solving or humor, and the recurring combinations arouse both comprehension and pleasure. In another quantitative study, Tseng Chiaoi and John Bateman sought to tie concrete uses of filmic elements with more abstract aspects of meaning. (Chiaoi and John had done a presentation on film-based discourse semantics at last year’s event.) Concentrating on characters’ action patterns, Chiaoi used computer software to trace their structures in television commercials and the war genre.

Squeezing the stimulus

Bopping after a session: Tseng Chiaoi and Paul Taberham.

The talks I’ve mentioned, along with several others, analyze processes that we can access by re-viewing films, studying their form and materials, and examining the genres and stylistic traditions to which they belong. But other talks concentrated on the subpersonal areas, the parts of our responses that we can’t access so easily.

For instance, how do we mentally stitch together various shots to create a unified space for a scene’s action? Film scholar Todd Berliner and psychologist Dale Cohen presented an account of how we achieve an illusion of spatial continuity. Initially the brain grasps shots as if they were pieces of space selected by the viewer, and then it builds up a model of the whole space—say a porch in front of a house’s front door. When a character moves his arm a certain way toward an offscreen area, our model of porches and doors makes it most probable that he is pressing the doorbell.

In such ways we run “beyond the information given.” Filmmakers count on our doing that, so they give us feedback (say, the sound of a doorbell, or a shot of a door opening) that confirms our model of the space. Most mainstream films build in such redundancy. But the unity of these spaces is undermined by some contemporary exhibition technology. Todd and Dale suggested that the mental models we build of space exclude the movie theatre, so that surround sound and 3D become problematic.

They also got several good questions. How concretely specified are these model spaces? How do they develop in the course of a scene? Might our perception of continuity come down to a lack of perception of discontinuity—that is, maybe we operate on very simple default assumptions and don’t build up many models of the space.

Todd and Dale’s presentation was in the Helmholtz tradition; they even invoked “unconscious inference” as part of the story. Another tradition, represented by SCSMI founders Joseph and Barbara Anderson, invokes James J. Gibson’s ecological approach to perception, which argues that such modeling and inference-making isn’t really happening. Things are much more direct: Perception is data-driven, and needs top-down correction only in rare cases (like nighttime or fog).

Other perceptual researchers try for a more parsimonious research strategy: How much information about the visual world can we squeeze out of the stimulus? This question was raised by Jordan DeLong, who has been exploring how we can identify emotional arousal through very “low-level” information. Using a corpus of 150 films (more on this later), he looked at shot lengths, the distribution of shot-lengths across a film, and a purely physical measure of visual activity (essentially the change from frame to frame) to see if they correlate with genres we associate with high levels of arousal, like action and adventure films. Jordan’s study is preliminary, but there is the possibility that certain purely physical features are reliable indices to levels of arousal—even if people don’t notice those features and are much more fastened on characters and their actions.

The current projects of Tim Smith’s research team exemplify the parsimonious strategy. Tim is a long-time participant in SCSMI, and his talks show how a research program can expand and enrich itself.

We know people look at certain areas of a shot. We also know that our attention is directed, driven by features of the stimulus. What features? We filmies would pick out shot composition, color, movement, lighting, shot scale, etc. We can access those middle-level variables through expert introspection and analysis. But can those features be further decomposed?

Tim thinks so. We can consider any of these technical qualities as made up of luminance, color channels, and other low-level physical aspects of vision. Through signal-detection methods, Tim seeks to pinpoint what the crucial variables are. The results of his work are soon to be published, and I don’t want to give the game away. I’ll just say that he has shown through eye-tracking that certain low-level features are more important than others in engaging viewers’ attention.

One implication of Tim’s findings is that what I’ve called “intensified continuity” seems to have an optimal grip on spectators. This technique, he remarked, “almost paralyzes the eyes,” yielding “an illusion of active vision with passive eyes.” More generally, his work seems to back up James Cutting’s remark that “There is no such thing as voluntary attention sustained for more than a few seconds at a time.” Most of our attention is at the mercy of the outside world, which means that filmmakers need to engage us at every moment—either with narrative, or with something else we’ll find arresting.

Dan Levin, Pia Tikka; in the background William Brown, Carl Plantinga, and Dirk Eitzen.

Dan Levin gave the keynote address at SCSMI two years ago, and he showed how we vastly overrate our ability to spot outrageous changes in the world or on the movie screen. (He’ll have a field day with the tricks in Shutter Island, such as the one surmounting this blog.) This time around Dan talked about Theory of Mind in the movies, the ways that films exploit our (species-specific?) inclination to attribute beliefs, desires, and goals to the creatures we see in the world, and in films. His paper scanned mandatory, bottom-up cues, middle-level activity (the sort of organization of visual space Todd and Dale discussed), and then “controlled cognition,” such as our narrative expectations. So for Dan it’s not all in the stimulus. Once we’ve picked out certain aspects of it, our Theory of Mind system locks on them.

What aspects? Chiefly, signals of intention and marked eye direction. When we think we’re watching an intentional agent, like a movie character, we tend to see the agent’s eye direction as giving us a clue to his or her aims. Using films he has made, Dan tests people for how eyeline-matched cuts are read, and he varies the cues to see how people construe them differently.

Interestingly, when he showed the same shots in a different order, about two-thirds of the subjects didn’t notice that the order was different. That is, whether the object of the glance or the person glancing came first, gaze deflection remained the primary cue to understanding the situation. This suggests to me that storytelling cinema doesn’t absolutely need the classic pattern of person looking/ person looked at/ person looking, but the extra shot makes sure we all understand (redundancy again).

A similar sort of top-down/ bottom-up theory was proposed by Dan Barratt, who gave it a more computational spin. Like Tim, he works on eye movements; like Todd and Dale he’s interested in how we construct space; like Dan, he seeks out intentional factors. It’s not all in the stimulus, but we won’t know how much until we keep squeezing.

Ripples in the flow of films

The Society broke new ground in what I called the “suprapersonal” realm, that of large-scale patterns of activity that aren’t explicitly coordinated by individuals or groups. Several researchers are probing this in relation to films. Films are, in effect, deposits of human behavior; they are artifacts resulting from choice. What if we find patterns of choice that we can’t plausibly trace to coordinated decision-making?

Chris Atherton raised the issue by considering how to study style statistically. Citing Barry Salt as a pioneer in the area, he focused on the work of the Cinemetrics group and offered suggestions on how to better collect data and track patterns at various levels of generality. More sharply, he posed the question of function. How can you measure that? One implication was that in a big body of films we can disclose order that can’t wholly be explained as the sum of individual choices. Those choices matter, but so do forces we have yet to determine, including historical processes. Chris’s reflections chimed nicely with those of our keynote speaker.

James E. Cutting is a distinguished perceptual psychologist at Cornell. He’s written a fine book on motion perception and has done a quantitative historical study of the creation of the canon of French Impressionist painting. He’s a former dancer and a sensitive appreciator of art and music (and film). He’s the ideal person to analyze ticklish aesthetic issues.

You may have run across him recently because his research on Hollywood films was picked up and trumpeted in the press. “Solved: The Mathematics of the Hollywood Blockbuster,” read one headline. Needless to say, James was doing something more subtle. You can read summaries here and here.

In his lively address, “Attention, Intensity, and the Evolution of Hollywood Film,” James explained two of his areas of interest: the ebb and flow of change across a film, and how that change is tied to human pickup. James studies these topics with a big database: 150 movies from 1935 to 2005. He emphasizes widely seen films belonging to five genres and chosen from the highest-rated titles on IMDB. But there’s a micro- side too. He and his research team went through every film frame by frame coding each one along many dimensions.

Naturally, he takes on the vexed issue of Average Shot Length. You can read the ongoing discussions of this concept on Yuri Tsivian’s Cinemetrics site. What interests James is less ASL in itself than the ways in which comparable patterns of shot lengths cluster in certain parts of the film. A film’s ASL may be 8 seconds, but in some passages several shots might have similar lengths, say 12 seconds each. Moreover, those patterns may “ripple through the film,” recurring at certain intervals and different scales (shot clusters, scenes, or other chunks).
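To see how two films can share an ASL and still differ in how their shot lengths cluster, here is a minimal Python sketch. It is only an illustration, not the procedure James and his team used. The function names and the toy shot lists are invented for the example, and lag-1 autocorrelation is just one simple, assumed way of asking whether neighboring shots tend to have similar lengths.

```python
def average_shot_length(shot_lengths):
    """Mean shot duration in seconds (the ASL)."""
    return sum(shot_lengths) / len(shot_lengths)


def lag_autocorrelation(shot_lengths, lag=1):
    """Correlation between each shot's length and the length of the shot
    `lag` positions later. Positive values mean similar lengths sit together."""
    n = len(shot_lengths)
    mean = sum(shot_lengths) / n
    variance = sum((x - mean) ** 2 for x in shot_lengths)
    if variance == 0 or lag >= n:
        return 0.0
    covariance = sum(
        (shot_lengths[i] - mean) * (shot_lengths[i + lag] - mean)
        for i in range(n - lag)
    )
    return covariance / variance


# Hypothetical data: the same eight shots in two different orders.
clustered = [12, 12, 12, 12, 4, 4, 4, 4]    # long shots grouped, short shots grouped
alternating = [12, 4, 12, 4, 12, 4, 12, 4]  # long and short shots interleaved

print(average_shot_length(clustered), average_shot_length(alternating))  # 8.0 8.0
print(lag_autocorrelation(clustered))    # 0.625
print(lag_autocorrelation(alternating))  # -0.875
```

Both toy films have an ASL of 8 seconds, but only the first shows runs of similar shots. Raising `lag` would probe recurrence at longer intervals, which is the sort of thing the phrase “ripple through the film” points to.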

Not every film shows such patterns; film noirs seem random in their patterns of shot lengths. But many movies, especially those of the last fifty years, do display these patterns: typically clusters of short shots for physical action, clusters of longer ones for conversations. This clustering tendency is on the increase, even outside the action genre.

The finding leads James to ask about the pacing of visual change, which raises the prospect of the sort of tension/ release dynamics we find in music. The patterns he finds don’t look arbitrary because they match the so-called 1/f or pink-noise pattern. This pattern has been detected in the natural world, in heartbeats, and in brain activity. It’s also been discovered in reaction times to tasks. In effect, the 1/f pattern captures not continual attentiveness but rather an alternation of intense concentration, moments of slower pickup, and moments of sheer mind-wandering. A nontechnical explanation of the 1/f pattern is here; a technical one is here.
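For readers who want a feel for what “1/f” means, here is a small Python sketch. In a 1/f series, the power at each frequency falls off in proportion to one over that frequency, so a plot of log power against log frequency has a slope near -1. The code builds a synthetic series with that property and recovers the slope. Everything here is an assumption made for illustration: the function names are invented, the series is artificial rather than real shot-length data, and the naive Fourier transform is a stand-in for whatever analysis the original study used.

```python
import cmath
import math


def synthetic_one_over_f(n=256):
    """Artificial series whose power at frequency k is proportional to 1/k.
    Built by summing cosines with amplitude 1/sqrt(k); the phases are arbitrary."""
    freqs = range(1, n // 2)
    return [
        sum(k ** -0.5 * math.cos(2 * math.pi * k * t / n + k) for k in freqs)
        for t in range(n)
    ]


def power_spectrum(series):
    """Power at frequencies 1 .. n//2 - 1, computed with a naive DFT."""
    n = len(series)
    mean = sum(series) / n
    centered = [x - mean for x in series]
    powers = []
    for k in range(1, n // 2):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * t / n)
            for t, x in enumerate(centered)
        )
        powers.append(abs(coeff) ** 2)
    return powers


def spectral_slope(series):
    """Least-squares slope of log(power) against log(frequency)."""
    points = [
        (math.log(k), math.log(p))
        for k, p in enumerate(power_spectrum(series), start=1)
        if p > 0
    ]
    mean_x = sum(x for x, _ in points) / len(points)
    mean_y = sum(y for _, y in points) / len(points)
    num = sum((x - mean_x) * (y - mean_y) for x, y in points)
    den = sum((x - mean_x) ** 2 for x, _ in points)
    return num / den


slope = spectral_slope(synthetic_one_over_f())
print(round(slope, 3))  # close to -1 for this constructed series
```

A slope near 0 would indicate white noise, where every frequency carries about equal power. In principle one could feed a film's sequence of shot lengths into `spectral_slope`, though real data are noisier and call for more careful estimation.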

As for intensity, James is seeking to measure visual activity in the shots. How much change, in effect, can there be from frame to frame? This can be captured by correlating each frame with its mates. James and his team find that from 1935 to 2005, there’s been a steady increase of frame-to-frame visual activity across all genres. The images became busier, with more movement. Today, he suggests, Hollywood is exploring ways to raise the frame-to-frame visual activity, not only through lots of movement of characters (the action film comes to mind) but also through “queasicam” handheld movements.
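The idea of correlating adjacent frames can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not the team's actual measure: frames are represented as flat lists of luminance values, the index is taken to be one minus the Pearson correlation between neighboring frames, and the names `pearson` and `visual_activity` are invented for the example.

```python
import math


def pearson(a, b):
    """Pearson correlation between two equal-length lists of pixel values."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        # Flat frames have no defined correlation; treat identical ones as unchanged.
        return 1.0 if a == b else 0.0
    return cov / math.sqrt(var_a * var_b)


def visual_activity(frames):
    """Assumed index: 1 - correlation for each pair of adjacent frames.
    0 means nothing changed; larger values mean a busier transition."""
    return [1.0 - pearson(f0, f1) for f0, f1 in zip(frames, frames[1:])]


# Hypothetical four-pixel frames: a held image, then an abrupt reversal.
frames = [
    [10, 20, 30, 40],
    [10, 20, 30, 40],
    [40, 30, 20, 10],
]
print(visual_activity(frames))  # [0.0, 2.0]
```

Averaging these values over a whole film would give a single activity score that could be compared across decades or genres, which is the kind of comparison the talk described.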

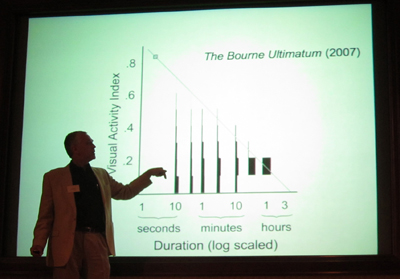

In combination with decreasing ASLs, Hollywood seems to be asking: How briefly can I show you this and still get the point across? So far, James suggests, films like Mission: Impossible III and The Bourne Ultimatum seem to be the busiest at the visual level. But animated films score about the same.

James has a caveat: The size of the screen matters. Even a big home-theatre screen doesn’t duplicate the breadth of a theatre screen, which activates not only our central vision but our peripheral vision too. That’s why bumpy shots that you can tolerate on a computer monitor may make you queasy at the multiplex. Interestingly, the Bourne films and Cloverfield, James suggests, were more popular on IMDB after the DVD versions came out. Perhaps people were better able to assimilate them on a smaller display.

At one level, James is interested in the subpersonal factors. He grants that you don’t notice cuts but can attend to them if you shift your focus from the story. But the widespread patterns he discloses aren’t easy to ascribe to deliberate planning. Nobody but an avant-gardist would decide to have shots of similar lengths at points 14 minutes or 25 minutes apart throughout the film. But James finds such correlations at levels beyond chance.

I can’t pretend to understand everything mathematical in James’ argument, but I think that his discoveries open up a new way to think about pacing in film. My first impulse is to think about historical causes, as Chris suggests: filmmakers learning from each other, converging on optimum choices. But I also like to entertain the possibility that this optimum carries a resonance even beyond the flux of history. Perhaps like fish and fowl, filmmakers are obeying and viewers are fulfilling, completely unawares, deep rhythms built into nature and numbers.

A tangled databank

Traditional humanists would decry a lot of what goes on at SCSMI meetings. The appeal to general explanations, the recourse to biology and evolution, the use of quantitative and experimental methods would all smack of “scientism.” But more and more, humanists are starting to turn away from the endless reinterpretation of canonical or non-canonical artworks. Many are also quietly defecting from the Big Theory that dominated the 80s and 90s. In film publishing, I’m told, editors have come to an informal moratorium on books on Deleuze. Possibly more people write them than read them.

Committed to a theory of permanent revolution in Theory, humanists are seeking new pastures. Some have discovered neuroscience, others evolutionary psychology. Franco Moretti has launched quantitative studies of the literary marketplace. For many converts, the reconciliation with science is just a bandwagon to hop onto, and they will jump off when a newer one trundles past. But other scholars have been committed from early on. The prospect of “consilience,” the compatibility between the sciences and the arts, is something the literary Darwinists like Brian Boyd, Jonathan Gottschall, Joseph Carroll, and like-minded souls were defending long before it became fashionable.

Film theory, as Joe Anderson is fond of pointing out, has a long and intense fascination with experimental psychology. Hugo Münsterberg, Rudolf Arnheim, and Sergei Eisenstein saw no conflict in studying film art with tools and findings derived from the sciences. That interest was lost in the 1960s, for a variety of reasons. But some of us have persisted. An explicitly “cognitive” perspective has been developing in film studies for the last twenty-five years, and SCSMI has nurtured this tradition since 1997. Our commitment is deep. We’re making headway. We’re not going to go away.

There is grandeur in this view of life, and cinema.

Our Society owes a great debt to Stephen Prince of the Virginia Tech School of Performing Arts and Cinema, who hosted this year’s convention. He also gave a splendid paper on the research traditions behind precinematic optical toys, as a way of thinking about modern CGI.

I found these books on suprapersonal patterns helpful: Mark Buchanan, The Social Atom: Why the Rich Get Richer, Cheats Get Caught and Your Neighbor Usually Looks Like You (London: Cyan, 2007); Steven Strogatz, Synch: The Emerging Science of Spontaneous Order (New York: Theia, 2003); Philip Ball, Critical Mass: How One Thing Leads to Another (New York: Farrar Straus Giroux, 2004).

Todd Berliner and Dale Cohen’s paper, “The Illusion of Continuity: Active Perception and the Classical Editing System,” is scheduled for publication in the Journal of Film and Video in 2011. Tim Smith’s paper is in press at Cognitive Computation as P. J. Mital, T. J. Smith, R. Hill, and J. M. Henderson, “Clustering of gaze during dynamic scene viewing is predicted by motion.” You can check Tim’s DIEM project for video demonstrations, and his blog, Continuity Boy.

The original article by James Cutting, Jordan DeLong, and Christine Nothelfer, “Attention and the Evolution of Hollywood Film,” appeared in the March issue of Psychological Science. Access is through subscription.

As for Shutter Island, thanks to Justin Daering for pointing out the double sleight-of-hand. More thoughts on the film and Scorsese’s expressionist/ impressionist tendencies are in our backfile.

1999 Conference of the Center [later Society] for Cognitive Studies of the Moving Image; Valby, Denmark. Photo by Johannes Riis.